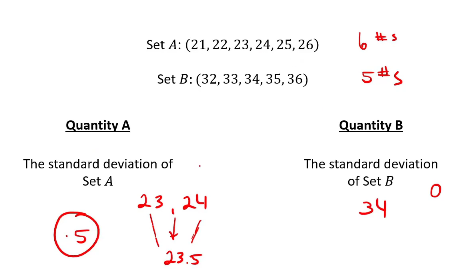

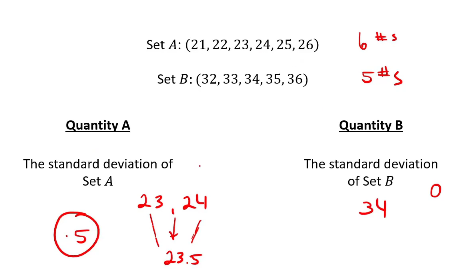

I’m having trouble understanding this problem. I was under the impression that standard deviation is the distance from the mean to the nearest numbers, so in the case of 32, 33, 34, 35, 36, shouldn’t the standard deviation be 1?

SD is more complicated than you think! But you don’t need to know the formula for the test. Your basic idea, however, is right: it measures “how far you are from the mean”.
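For reference, here are the usual formulas, where x̄ is the mean: σ divides by n (population SD) and s divides by n − 1 (sample SD). Below them is the arithmetic for 32, 33, 34, 35, 36 from the question.

$$\sigma = \sqrt{\frac{1}{n}\sum_{i=1}^{n}(x_i - \bar{x})^2}, \qquad s = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}(x_i - \bar{x})^2}$$

$$\bar{x} = 34, \qquad \sum_{i=1}^{5}(x_i - \bar{x})^2 = 4 + 1 + 0 + 1 + 4 = 10$$

$$\sigma = \sqrt{10/5} = \sqrt{2} \approx 1.41, \qquad s = \sqrt{10/4} \approx 1.58$$

Either way, the SD comes out above 1, not exactly 1.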

If you had a sequence of 3 consecutive integers, such as (22, 23, 24) or (34, 35, 36), your SD would be 1 in both cases (using the sample formula, which divides by n − 1).
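A quick way to check this is Python’s standard `statistics` module. `stdev` is the sample SD (divides by n − 1) and `pstdev` is the population SD (divides by n). The sketch below shows that both triples give a sample SD of exactly 1, while the population SD is about 0.816.

```python
from statistics import pstdev, stdev

# The two example triples from the post: three consecutive integers each.
triples = [(22, 23, 24), (34, 35, 36)]

for t in triples:
    # stdev divides by n - 1 (sample SD); pstdev divides by n (population SD).
    print(t, "sample SD:", round(stdev(t), 3), "population SD:", round(pstdev(t), 3))
```

Shifting the whole set (22 to 34) doesn’t change the SD, because every value keeps the same distance from the mean.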

But the more such numbers a sequence contains, the bigger its SD gets, because the values at the ends sit farther from the mean. That is why 32, 33, 34, 35, 36 has an SD greater than 1.
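To see the growth, here is a minimal sketch that computes the SD of n consecutive integers starting at 32 (the start of the set in the question) for a few values of n. The helper name `consecutive_sd` is made up for illustration.

```python
from statistics import pstdev, stdev

def consecutive_sd(start, n):
    """Return (sample SD, population SD) of n consecutive integers from start."""
    values = list(range(start, start + n))
    return stdev(values), pstdev(values)

# Same spacing (1 apart), but more numbers: the SD keeps growing.
for n in (3, 5, 7, 9):
    s, p = consecutive_sd(32, n)
    print(f"n={n}: sample SD={s:.3f}, population SD={p:.3f}")
```

For n = 5 (that is, 32 to 36) this gives a sample SD of about 1.58 and a population SD of about 1.41, matching the hand calculation. In general the population SD of n consecutive integers is √((n² − 1)/12), which increases with n.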

I have no idea why the SD for Set B would be 0?! Mathematically speaking, Set A’s SD is about 1.8 and Set B’s SD is about 1.5.

The answer is still A.

Can you send me the video, please?